Original Title: Why Does Everyone Hate AI?

Compiled by: SpecialistXBT, BlockBeats

Editor’s Note: In China, the Openclaw frenzy has brought AI Agents into the lives of ordinary people. In venture capital circles, new model breakthroughs, new fundraising legends, and grand narratives about AI reshaping the world emerge every few weeks. Yet in stark contrast to the enthusiasm of the tech and investment worlds, public sentiment toward AI is far less optimistic, and a noticeable anti-AI mood is spreading. Why does a technology hailed as the “next industrial revolution” provoke such intense aversion and hostility at the same time? This article tries to explain that paradox of public sentiment from three angles: the history of technology, the economic mood, and cultural psychology.

If you want to get a feel for the current zeitgeist, there’s one place particularly worth checking out: the comment sections on TikTok. When you start reading TikTok comments, you’ll notice one sentiment over and over again: a sharp, intense, almost instinctive hatred for AI.

Here are some comments I pulled from under a video last night:

The vibe… is not great.

I’ve been thinking about this a lot lately. My column, *Digital Native*, is a publication focused on the intersection of people and technology. And right now, people seem to really despise the most important technology of our time. Clearly, this tension presents a challenge: it’s hard for AI to achieve mass adoption when many people outright refuse to use it.

One comment captures the mood: “Someone asked me the other day how many times a day I use ChatGPT. I said I’ve never used it, and they were shocked. I’ll keep on hating on AI.”

I don’t think Silicon Valley fully realizes just how much most Americans despise AI. I also don’t think Silicon Valley has seriously considered how to respond to this backlash.

This article has three parts:

1. A Brief History of Technological Skepticism

2. Why Is AI So Hated?

3. How to Fix AI’s PR Problem

Without further ado, let’s begin.

A Brief History of Technological Skepticism

Technology has always had its skeptics. Even the seemingly mundane art of writing was once criticized: Socrates, in Plato’s *Phaedrus*, argued that the invention of writing would “implant forgetfulness in the souls” of men, weakening their memory. He wasn’t entirely wrong, but he was clearly being alarmist. The shift from oral memory to writing allowed humans to construct more complex, advanced ideas and, consequently, more complex, advanced societies. Of course, sometimes writing actually prevents forgetting (e.g., a shopping list). And, ironically, we only know Socrates’ views because Plato *wrote* them down. Funny how that works.

By the 1500s, with the advent of the printing press, Swiss scientist Conrad Gessner warned that information overload would be “confusing and harmful” to the human mind. Two hundred years later, with the rise of newspapers, a French politician suggested that newspapers would isolate readers and destroy the uplifting experience of collectively receiving news from the church pulpit. While I’ve never heard news from a pulpit, I can confidently say: I prefer reading *The New York Times* with my coffee.

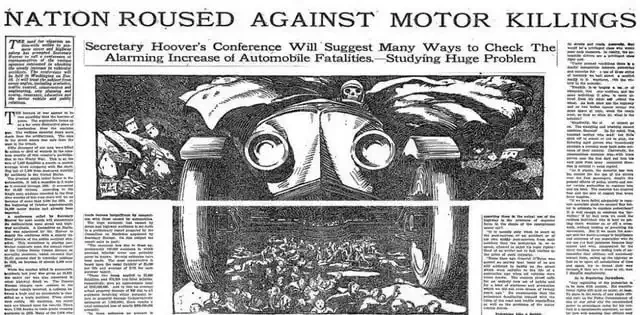

Fast forward to the 1900s, and cars became a target. Speaking of *The New York Times*: the paper once ran the headline “Nation Rages Against Motor Killings” (you can still find it). A widely circulated statistic at the time claimed that in the first four years after World War I, more Americans died in car accidents than were killed on the battlefields of France.

1924 *New York Times* headline: “Nation Rages Against Motor Killings.”

I’m inclined to think people were actually right on this one: our children looking back at history will likely be incredulous that we ever put ourselves into 4,000-pound death machines and hurled them down roads at high speeds. But the anxiety was moot by then: the genie was out of the bottle, and there was no putting it back.

There are many similar stories. The phonograph was accused of draining the vitality from live, emotionally human performance; critics believed recorded music would kill amateur musicianship and ruin musical taste. (Hard to imagine what those critics would say about suno.ai.) Television, meanwhile, is perhaps one of the most famously controversial technologies; it even earned the nicknames “idiot box” and “boob tube.” Critics argued TV would destroy community, shorten attention spans, and encourage violence. It probably did all three.

1948, a boy’s reaction upon seeing television for the first time.

Entering this century, the internet and social media also faced backlash, some of it justified, some not. The pace of technological progress has been steady and predictable, and so has humanity’s backlash to innovation. There’s a long-standing tradition: fearing the things we create.

Frankenstein’s monster is perhaps the best metaphor for humanity’s fear of its own creations.

Of course, every new technology brings both benefits and harms; technology itself is a mirror of society. As the line usually attributed to Marshall McLuhan puts it, “We shape our tools, and thereafter our tools shape us.”

And that brings us to AI—the most hated technology in my lifetime.

Why Is AI So Hated?

AI’s backlash follows some of the historical patterns above, but I think the sentiment towards AI has moved beyond skepticism to hostility. I see several reasons:

AI arrived at a terrible moment for the tech industry’s public image.

In the early 2010s, the tech industry was cool. Everyone wanted to work at Google or Facebook and play ping-pong after a free lunch. There was even a 2013 movie, *The Internship*, about Vince Vaughn and Owen Wilson interning at Google. That same year, Sheryl Sandberg published *Lean In*. Marissa Mayer was reviving Yahoo, Apple’s spaceship campus was being built, and WeWork was a fast-growing real estate tech company. The vibe was good.

A decade later, when ChatGPT arrived, public sentiment towards tech had shifted. Facebook had weathered the Cambridge Analytica scandal, new research revealed Instagram’s impact on mental health, and too many people had lost money on meme coins and expensive JPEGs. The vibe had soured.

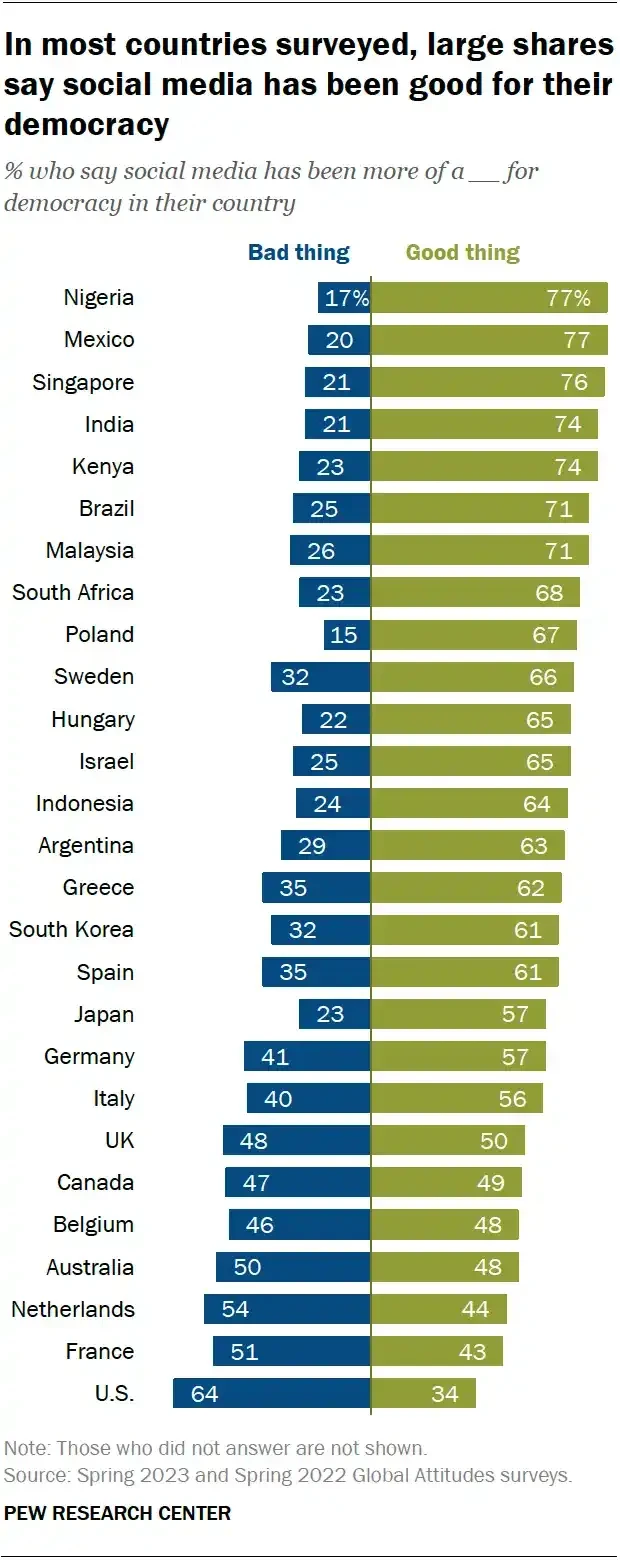

Some research shows that people’s views on AI are highly correlated with their views on social media. At ChatGPT’s launch, countries with more positive views of social media were also more receptive to AI. And those that saw social media as the greatest threat to democracy…

Simply put: AI’s timing was bad. People already distrusted tech companies.

Job fear is real, and it’s arriving at a time when people feel bad about the economy.

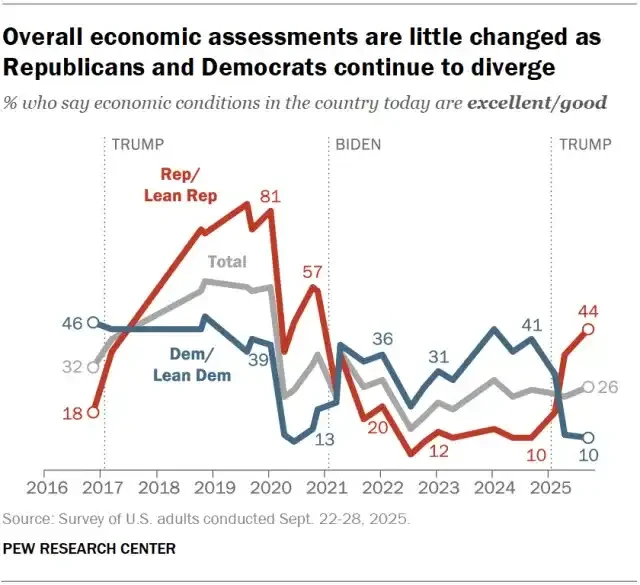

AI also arrived in a tough economic environment. ChatGPT launched in November 2022, when most Americans felt bad about the economy.

People aren’t eagerly anticipating a disruptive technology that might take their jobs. When people hear words like “copilot” and “augmentation,” they think: layoffs. Again, AI’s timing is not great.

The creative industry shapes culture, and AI poses a unique threat to creative work.

Some of the sharpest AI criticism comes from the creative industry. You can see it on TikTok.

Last year, Adrien Brody won an Oscar for *The Brutalist*, but the filmmakers had revealed that they used AI to refine his Hungarian accent in the film, something TikTok users still criticize. Taylor Swift faced backlash for using AI-generated videos to promote *The Life of a Showgirl*. In an episode of *The Studio* (a fantastic show), an angry viewer yells at Seth Rogen’s studio-executive character for using AI in a Kool-Aid movie, and Ice Cube even shouts, “F*ck AI!”

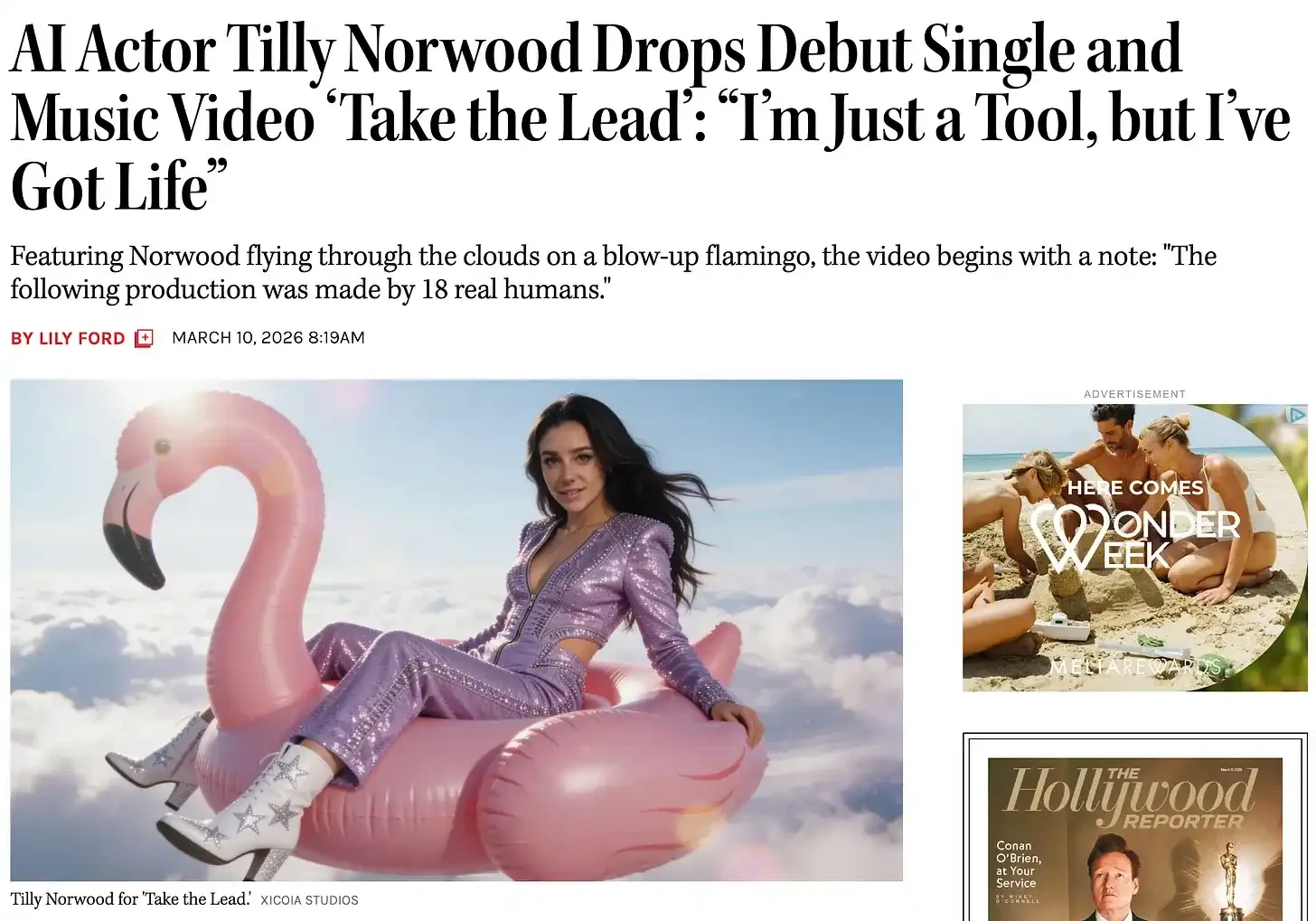

And of course, there was the 2023 SAG-AFTRA strike, the longest in Hollywood history, and since then we’ve even started seeing AI actors like Tilly Norwood. A real headline from *The Hollywood Reporter* yesterday was:

Creative workers are the ones who shape culture and public opinion. If AI is seen as an existential threat to creative work, its impact ripples throughout the culture.

AI is inauthentic, and the current cultural trend is all about authenticity. AI is online, and offline is now in vogue.

Vinyl record sales are at a 30-year high, Gen Z is buying film cameras, and flip phones (so-called “dumb phones”) are making a comeback. There’s a cultural trend towards the analog, the human, the tactile. And AI is synthetic. The nostalgia wave is partly a reaction to AI mania, but it started well before transformer models. Offline living is cool now, and AI is the most “online” thing there is. When people crave authenticity, a technology that is by definition “fake” is naturally at a disadvantage.

AI is seen as an attack on identity.

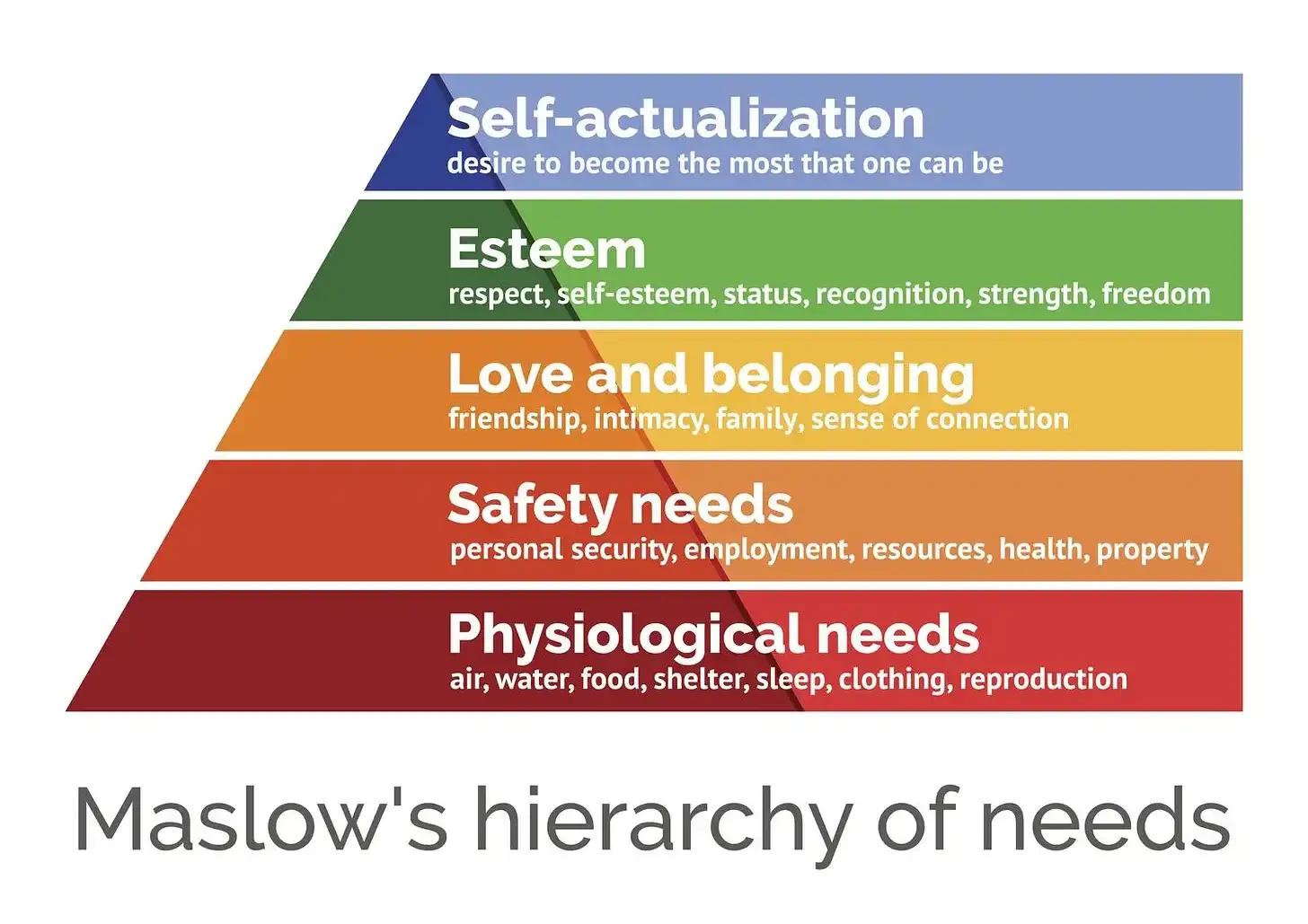

The fifth reason is the most nebulous but possibly the most important. AI makes people feel inferior to machines at the things they’re most proud of. What does that mean? Look at Maslow’s hierarchy of needs: AI is attacking the top of the pyramid.

Past waves of automation typically happened at the base of the pyramid. Steam engines and assembly lines, for example, replaced physical labor (the physiological work of survival), and early software automated clerical and administrative work. People did feel replaced, but automation didn’t encroach on the things people valued most about themselves.

AI is climbing to the top of the pyramid and starting to dismantle it. Many people define themselves by their creativity: writing, painting, music. Many also take pride in being good at their jobs: programming, legal work, customer service. AI is invading these identity domains, and it’s happening very fast. If a graphic designer’s identity is built on creating beautiful images, and Midjourney can generate a “better” image in seconds… that’s hard to swallow.

I think one TikTok comment sums it up well:

“I want AI to do the chores I don’t want to do, not the hobbies I want to do.”

The angry AI critics on TikTok are often knowledge workers, people at the top of the educational and economic pyramid who thought they were immune to technological displacement. AI is threatening the most privileged, which almost inverts the history of technological progress.

How to Fix AI’s PR Problem

Most technological backlash stems from an instinctive fear of the new. But AI’s backlash feels like a confluence of factors: broken trust, economic anxiety, and a cultural mood primed to reject new technology, especially one that touches such deeply human domains. But the genie is out of the bottle, and AI does have many amazing applications; I’m a firm AI believer myself. So, how do we fix this PR problem?

Start at the Bottom of the Pyramid

AI’s most compelling applications are actually the life-saving ones. For example, AI can detect some cancers earlier than radiologists can. Applications like this address the most fundamental human need (staying alive) and deserve far more emphasis.

Tell Stories with “Pain Points,” Not “Capabilities”

Some companies we’ve invested in at Daybreak have quietly switched their .ai domains back to .com. Founders need to be very careful when communicating AI to customers. They should lead with the problem they’re solving. A nurse doesn’t care if they’re using Opus or Sonnet; they care if the product lets them finish paperwork faster. Most tech industry launches emphasize what AI *can do* (model capabilities), not what problems AI solves for ordinary people. The narrative should shift from “this model has 1 trillion parameters” to “this product eliminates 4 hours of repetitive work.”

Change the Messengers—Stop Letting VCs Talk

Maybe this is my cue to end this article. Nobody wants to hear VCs talk. The loudest pro-AI voices come from tech CEOs and venture capitalists, two groups the American public trusts the least. If I were in charge of an AI marketing campaign, I’d have real users make the ads: farmers, accountants, home healthcare workers. Even OpenAI or Anthropic would be more persuasive with a Super Bowl ad featuring real users than with vague inspirational montages (OpenAI) or subtle digs at competitors (Anthropic).

Acknowledge Labor Market Changes, Then Emphasize Retraining and New Jobs

Many founders and VCs love to cite data showing AI will create more jobs than it destroys. But that doesn’t matter to the person who just lost their job. The term “Luddite” comes from English textile workers who, in the 1810s, organized to smash the machines threatening their livelihoods.

Those textile workers probably understood that the new machines would ultimately make society better, but they also knew the machines would make their own lives worse in the present. The right response to a massive labor market shock is to acknowledge it, then actually fund programs that retrain workers.

Make Humans More Visible in AI Products

If I were Pixar, I’d run a contest: see who in the world can make the best animated short using AI tools. In such an exercise, technology levels the playing field: anyone with a good story can create something beautiful from their living room. The artist is still at the center. If we had more projects like this, people would better understand how AI can amplify human creativity and be an equalizing tool. Just a thought.

Conclusion

Last month, Trump’s State of the Union address became the longest in history, 20 minutes longer than Clinton’s in 2000. But in nearly two hours of speaking, Trump mentioned AI only three times.

Obviously, a lot is happening in the world; we’re at an incredibly fragile geopolitical moment (I highly recommend Ray Dalio’s writing on the breakdown of world order). But at the same time, we’re also in the early stages of perhaps the biggest technological shift of this generation, maybe ever. Mentioning AI only three times in a two-hour speech shows we’re still very early.

Billions of people globally have still never used AI. In the US, many people even pride themselves on never having used AI.

This is clearly unsustainable. AI adoption is coming fast, and it’s colliding head-on with the strongest anti-tech sentiment in a century (maybe ever).

Silicon Valley confidently believes AI will win out in the end; of course it will. Technology always wins. But that confidence also makes the industry look arrogant to a skeptical public, leaving a trail of resentment that could ultimately hurt Silicon Valley. The coolest thing about Silicon Valley is its long history of building technology for billions of people. But it becomes very hard to do that when billions of people think you’re the villain.

