How does io.net build a decentralized computing power platform?

Background

The launch of OpenAI's GPT-4 and the rise of various text-to-image models have demonstrated the potential of AI. As more applications are built on mature AI models, demand for computing resources such as GPUs has risen sharply.

A 2023 article by GPU Utils on the supply and demand of Nvidia H100 GPUs pointed out that large companies in the AI business have strong demand for GPUs, and that technology giants such as Meta, Tesla, and Google have purchased large numbers of Nvidia GPUs to build AI-oriented data centers. Meta has about 21,000 A100 GPUs, Tesla has about 7,000 A100s, and Google has also invested heavily in GPUs for its data centers, although specific numbers were not provided. Driven by the need to train large language models (LLMs) and other AI applications, demand for GPUs, especially H100s, continues to grow.

At the same time, according to Statista, the AI market grew from $134.8 billion in 2022 to $241.8 billion in 2023 and is expected to reach $738.7 billion in 2030. The market value of cloud services has also increased by about 14% from $633 billion, part of which is attributed to the rapid growth in demand for GPU computing power in the AI market.
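To put the Statista figures in perspective, the short sketch below converts them into growth rates. It uses only the three market-size numbers quoted above; the helper name `cagr` is ours, and the result is an implied average rate, not a forecast from the source.

```python
# Market-size figures quoted above (Statista), in billions.
SIZE_2022 = 134.8
SIZE_2023 = 241.8
SIZE_2030 = 738.7


def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two values."""
    return (end / start) ** (1 / years) - 1


# One-year growth from 2022 to 2023.
growth_2022_2023 = SIZE_2023 / SIZE_2022 - 1

# Average yearly growth implied by the 2030 projection.
implied_cagr_2023_2030 = cagr(SIZE_2023, SIZE_2030, 2030 - 2023)

print(f"2022 -> 2023 growth: {growth_2022_2023:.1%}")
print(f"Implied 2023 -> 2030 CAGR: {implied_cagr_2023_2030:.1%}")
```

In other words, the market grew by roughly 79% in a single year, while the 2030 projection implies a slower average of roughly 17% per year afterwards.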

How can we break down the fast-growing AI market to find investment opportunities? Based on a report by IBM, we summarized the infrastructure required to create and deploy AI applications and solutions. AI infrastructure exists mainly to process and optimize the large data sets and computing resources that model training relies on, addressing data-processing efficiency, model reliability, and application scalability through both hardware and software.

AI model training and applications require large amounts of computing resources. They favor low-latency cloud environments and GPU computing power, and their software stack also includes distributed computing platforms such as Apache Spark and Hadoop. Spark distributes workloads across large computing clusters and has built-in parallelism and fault tolerance.

Blockchain's decentralized design makes distributed nodes the norm, and the Proof of Work (PoW) consensus mechanism introduced by Bitcoin requires miners to compete on computing power (workload) to win blocks. This resembles AI workloads, which also consume computing power to train models and run inference. As a result, traditional cloud server providers began to expand into new business models, renting out graphics cards and selling computing power the way they rent out servers. Borrowing from blockchain, AI computing power platforms have also adopted distributed system designs, which can put idle GPU resources to use and reduce computing costs for startups.

Introduction to the io.net Project

io.net is a distributed computing provider integrated with the Solana blockchain that aims to use distributed computing resources (GPUs and CPUs) to address the computing demand challenges in AI and machine learning. io.net integrates idle graphics cards from independent data centers and cryptocurrency miners, and partners with crypto projects such as Filecoin and Render, to bring together the resources of more than 1 million GPUs and address the shortage of AI computing resources.

On the technical level, io.net is built on Ray (ray.io), a distributed computing framework for machine learning, and provides distributed computing resources to AI workloads that need them, from reinforcement learning and deep learning to model tuning and running models. Anyone can join io.net's computing network as a worker or developer without additional permission. The network adjusts the price of computing power according to the complexity and urgency of the job and the supply of computing resources, pricing according to market dynamics. Because computing power is distributed, io.net's backend also matches GPU providers with developers based on the type of GPU requested, current availability, and the location and reputation of the requester.
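The matching step can be pictured with a small sketch. This is not io.net's actual backend logic, which the sources do not describe in detail. The `Provider` fields, the `match_provider` function, and the ranking rule (same region first, then reputation) are all assumptions made for illustration, and the reputation score is attached to providers here purely to keep the example simple.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Provider:
    """Hypothetical record for a GPU supplier on the network."""
    name: str
    gpu_type: str
    available: bool
    region: str
    reputation: float  # assumed 0.0-1.0 score, not an io.net field


def match_provider(
    providers: list[Provider], gpu_type: str, region: str
) -> Optional[Provider]:
    """Pick an available provider with the requested GPU type, or None."""
    candidates = [
        p for p in providers if p.available and p.gpu_type == gpu_type
    ]
    if not candidates:
        return None
    # Assumed ranking: prefer the requester's region, then higher reputation.
    return max(candidates, key=lambda p: (p.region == region, p.reputation))


providers = [
    Provider("a", "RTX 3090", True, "eu", 0.70),
    Provider("b", "RTX 3090", True, "us", 0.90),
    Provider("c", "A100", True, "us", 0.95),
    Provider("d", "RTX 3090", False, "us", 0.99),
]

best = match_provider(providers, gpu_type="RTX 3090", region="us")
print(best.name if best else "no match")
```

A production scheduler would presumably also weigh price, latency, and job size, but the filter-then-rank shape is the same.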

$IO is the native token of the io.net system and serves as the medium of exchange between computing power providers and buyers. Paying with $IO avoids the 2% order handling fee charged on $USDC payments. $IO also plays an important incentive role in keeping the network running: token holders can stake $IO to nodes, and node operators must also stake $IO in order to earn the corresponding income while their machines are idle.

The current market cap of $IO is approximately $360 million, and its fully diluted valuation (FDV) is approximately $3 billion.
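As a quick consistency check, the two valuation figures can be tied together using the supply numbers given in the token economics section (95 million circulating, 800 million maximum). The sketch assumes FDV is simply the implied token price multiplied by the maximum supply; the variable names are ours.

```python
# Figures quoted in this article.
market_cap_usd = 360e6       # approximate market cap
circulating_supply = 95e6    # current circulating $IO
max_supply = 800e6           # maximum total $IO supply

# Price implied by the market cap and circulating supply.
implied_price = market_cap_usd / circulating_supply

# Fully diluted valuation: the same price applied to the maximum supply.
fdv_usd = implied_price * max_supply

print(f"Implied price: ${implied_price:.2f}")
print(f"Implied FDV:   ${fdv_usd / 1e9:.2f} billion")
```

The implied FDV comes out slightly above $3 billion, which agrees with the approximate figure quoted above.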

$IO Token Economics

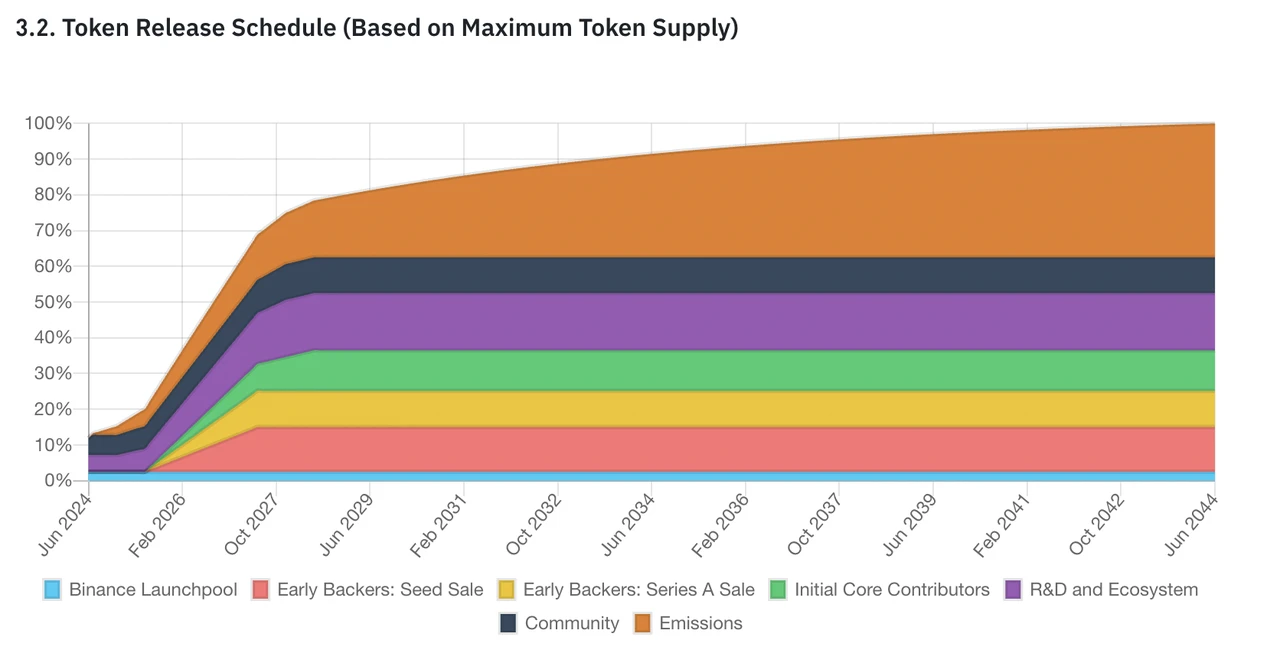

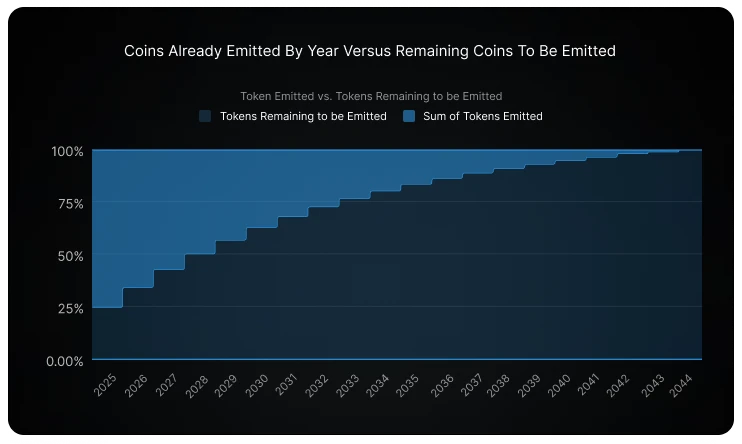

The maximum total supply of $IO is 800 million tokens. Of these, 500 million were allocated to all parties at the token generation event (TGE), and the remaining 300 million will be released gradually over 20 years (the release amount decreases by 1.02% per month, or about 12% per year). The current circulating supply of $IO is 95 million, consisting of 75 million unlocked at TGE for ecosystem research and community building and 20 million in mining rewards from Binance Launchpool.
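The release schedule can be approximated with a geometric series. The sketch below assumes that each month's emission is exactly 1.02% smaller than the previous month's and that the 240 monthly emissions sum to exactly 300 million; the official schedule may differ in its details. The function and constant names are ours.

```python
MONTHLY_DECAY = 0.0102        # emission shrinks by 1.02% each month
MONTHS = 20 * 12              # 20-year release period
EMISSION_POOL = 300_000_000   # tokens released after TGE


def emission_schedule(
    pool: float = EMISSION_POOL,
    decay: float = MONTHLY_DECAY,
    months: int = MONTHS,
) -> list[float]:
    """Monthly emissions under a pure geometric decay that sums to `pool`."""
    q = 1 - decay
    # First term of a geometric series with ratio q and `months` terms.
    first = pool * (1 - q) / (1 - q ** months)
    return [first * q ** m for m in range(months)]


schedule = emission_schedule()

# Compounding the monthly decay over 12 months gives the yearly decay.
annual_decay = 1 - (1 - MONTHLY_DECAY) ** 12

print(f"First-month emission: {schedule[0]:,.0f} IO")
print(f"Last-month emission:  {schedule[-1]:,.0f} IO")
print(f"Yearly decay:         {annual_decay:.1%}")
```

Under these assumptions the first month releases a little over 3.3 million IO, and the compounded yearly decrease is about 11.6%, which matches the "about 12% per year" figure.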

The rewards for computing power providers during the IO testnet are distributed as follows:

- Season 1 (as of April 25) – 17,500,000 IO
- Season 2 (May 1 – May 31) – 7,500,000 IO
- Season 3 (June 1 – June 30) – 5,000,000 IO

In addition to the testnet computing power rewards, IO also gave some airdrops to creators who participated in building the community:

- (First Round) Community / Content Creators / Galxe / Discord – 7,500,000 IO
- Season 3 (June 1 – June 30) Discord and Galxe participants – 2,500,000 IO

The Season 1 testnet computing power rewards and the first round of community creator/Galxe rewards were airdropped at TGE.

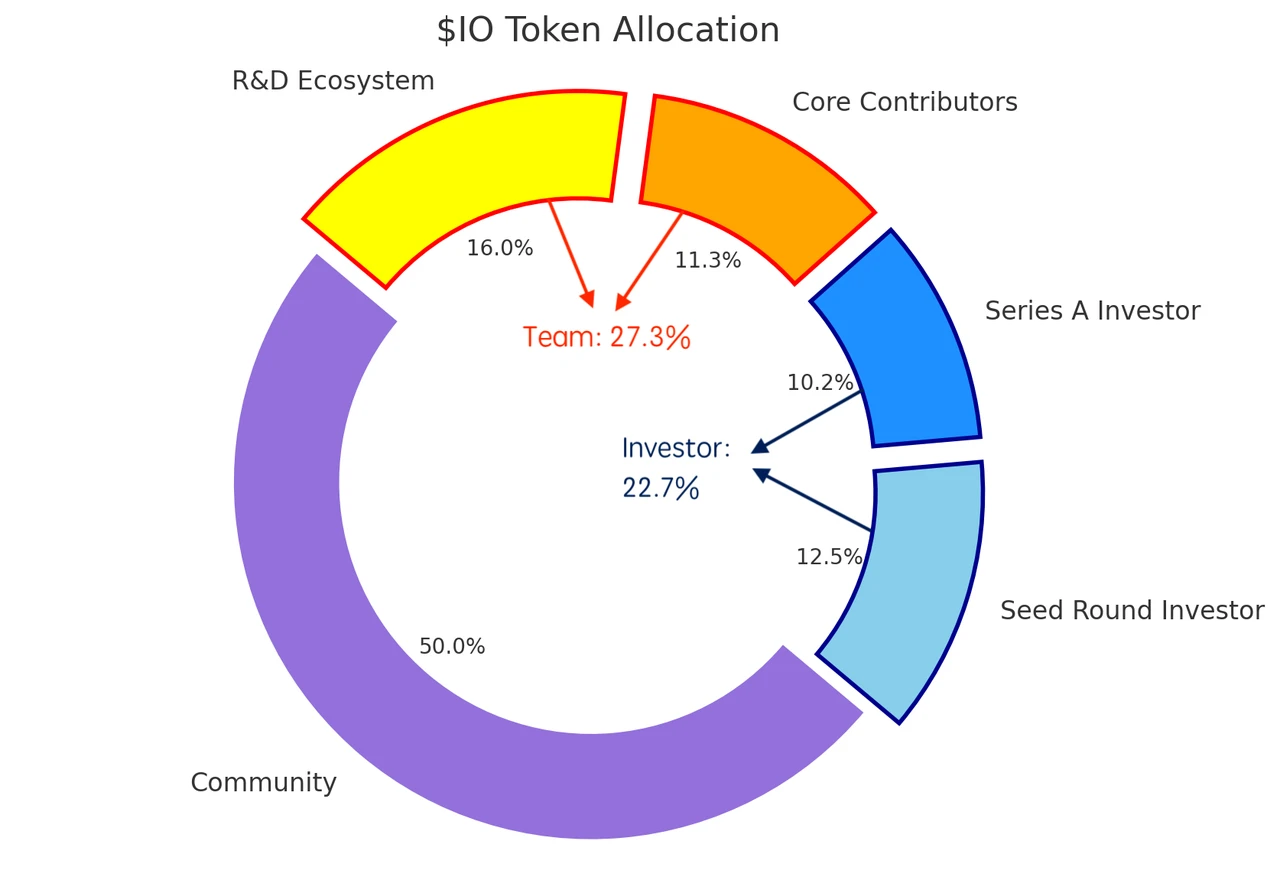

According to the official documentation, the overall allocation of $IO is as follows:

$IO Token Burning Mechanism

io.net repurchases and burns $IO tokens according to a fixed, preset procedure. The amount repurchased and burned depends on the $IO price at the time of execution. The funds used to repurchase $IO come from the operating income of IOG (The Internet of GPUs): a 0.25% order booking fee charged to both computing power buyers and providers, and a 2% handling fee on computing power purchased with $USDC.
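To make the fee structure concrete, here is a minimal sketch of the revenue a single order would contribute to the buyback pool. It assumes that every fee is a percentage of the order value and that the buyer and the provider are each charged the 0.25% booking fee separately; the function name and return format are illustrative, not part of any io.net API.

```python
BOOKING_FEE = 0.0025        # 0.25%, charged to buyer and to provider
USDC_HANDLING_FEE = 0.02    # 2%, only when the order is paid in USDC


def order_fees(order_value: float, paid_in_usdc: bool) -> dict[str, float]:
    """Fees collected on one order, in the order's own currency unit."""
    buyer_booking = order_value * BOOKING_FEE
    provider_booking = order_value * BOOKING_FEE
    handling = order_value * USDC_HANDLING_FEE if paid_in_usdc else 0.0
    return {
        "buyer_booking": buyer_booking,
        "provider_booking": provider_booking,
        "usdc_handling": handling,
        "total": buyer_booking + provider_booking + handling,
    }


usdc_order = order_fees(1_000.0, paid_in_usdc=True)
io_order = order_fees(1_000.0, paid_in_usdc=False)

print(f"Fees on a 1,000 order paid in USDC: {usdc_order['total']:.2f}")
print(f"Fees on a 1,000 order paid in IO:   {io_order['total']:.2f}")
```

On these assumptions an order worth 1,000 generates 25 in fees when paid in $USDC but only 5 when paid in $IO, which is the incentive to pay in the native token.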

Competitive Product Analysis

Projects similar to io.net include Akash, Nosana, OctaSpace, Clore.AI, and other decentralized computing power markets that focus on solving the computing needs of AI models.

- Akash Network uses a decentralized marketplace model to aggregate idle distributed computing resources and rent out excess computing power. It responds to supply and demand imbalances through dynamic discounts and incentive mechanisms, and uses smart contracts to achieve efficient, trustless resource allocation, providing secure, cost-effective, decentralized cloud computing services. It allows Ethereum miners and other users with underutilized GPU resources to rent them out, creating a cloud services market. Pricing is set through a reverse auction in which providers bid to fulfill buyers' orders, driving prices down.

- Nosana is a decentralized computing power market in the Solana ecosystem. Its main purpose is to use idle computing resources to form a GPU grid that meets the computing needs of AI inference. The project defines the operation of its market through programs on Solana and ensures that the GPU nodes participating in the network complete their tasks properly. Currently, it is running the second phase of its test network and provides computing power for Llama 2 and Stable Diffusion inference.

- OctaSpace is an open source, scalable distributed computing cloud node infrastructure that provides access to distributed computing, data storage, services, VPN, and more. It offers CPU and GPU computing power and disk space for ML tasks, AI tools, image processing, and scene rendering with Blender. OctaSpace was launched in 2022 and runs on its own EVM-compatible Layer 1 blockchain, which uses a dual-chain system combining the Proof of Work (PoW) and Proof of Authority (PoA) consensus mechanisms.

- Clore.AI is a distributed GPU supercomputing platform that lets users obtain high-end GPU computing resources from nodes around the world. It supports uses such as AI training, cryptocurrency mining, and movie rendering. The platform provides low-cost, high-performance GPU services, and users can earn Clore token rewards by renting GPUs. Clore.AI emphasizes security, complies with European law, and provides an API for integration. In terms of project quality, however, Clore.AI's website is relatively rough, and there is no detailed technical documentation to verify the project's self-description or its data. We remain skeptical about its graphics card resources and the real level of participation in the project.
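The reverse auction mentioned for Akash Network can be sketched in a few lines. This is a simplified illustration and not Akash's on-chain implementation: it assumes the buyer states a maximum price, providers submit bids, and the lowest bid at or below that maximum wins. The function name, the bid format, and the automatic lowest-price selection rule are all assumptions.

```python
from typing import Optional


def run_reverse_auction(
    max_price: float, bids: dict[str, float]
) -> Optional[tuple[str, float]]:
    """Return (provider, price) for the lowest acceptable bid, or None.

    `bids` maps a provider name to the price it offers for the order.
    """
    # Discard bids above what the buyer is willing to pay.
    acceptable = {p: price for p, price in bids.items() if price <= max_price}
    if not acceptable:
        return None
    # Providers compete downward: the cheapest acceptable bid wins.
    winner = min(acceptable, key=acceptable.get)
    return winner, acceptable[winner]


bids = {"provider_a": 1.20, "provider_b": 0.95, "provider_c": 1.60}
result = run_reverse_auction(max_price=1.50, bids=bids)
print(result)
```

Because providers undercut one another to win the order, the clearing price tends toward the lowest price at which a provider is still willing to supply the hardware.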

Compared with other products in the decentralized computing market, io.net is currently the only project that anyone can join to provide computing resources without permission. Users can contribute computing power to the network with as little as a consumer-grade 30-series GPU, and Apple silicon devices such as the MacBook M2 and Mac Mini are also supported. More abundant GPU and CPU resources and a rich set of APIs enable io.net to support a range of AI computing needs, such as batch inference, parallel training, hyperparameter tuning, and reinforcement learning. Its backend infrastructure consists of a series of modular layers that enable effective resource management and automated pricing. Other distributed computing market projects mostly partner with enterprises for graphics card resources and set a certain threshold for user participation. io.net may therefore be able to use the crypto flywheel of its token economics to attract more graphics card resources.

Below is the current market value/FDV comparison of io.net and its competitors:

Review and Conclusion

$IO's listing on Binance can be seen as a fitting conclusion for a heavyweight project that has attracted attention since its launch, through the popularity of its test network as well as the criticism it gradually drew for delays in actual testing and for its opaque points rules. The token launched during a market correction, opened low and closed high, and eventually returned to a relatively rational valuation range.

However, for the test network participants drawn in by io.net's strong investor lineup, the results were mixed. Most users who rented GPUs but did not persist through every season of the test network did not get the excess returns they expected and instead faced losses. During the test network, io.net split each season's prize pool into two pools, GPU and high-performance CPU, and calculated them separately. The announcement of Season 1 points was postponed due to a hacking incident, but the points exchange ratio of the GPU pool was eventually set at nearly 90:1 at TGE. For users who rented GPUs from major cloud providers, costs far exceeded airdrop income. During Season 2, the team fully implemented the PoW verification mechanism, and nearly 30,000 GPU devices participated and passed verification. The final points redemption ratio was 100:1.

After its much-anticipated start, whether io.net can achieve its stated goal of meeting the computing needs of every stage of AI applications, and how much real demand will remain after the test network, only time will tell.

References:

https://blockcrunch.substack.com/p/rndr-akt-ionet-the-complete-guide

https://www.odaily.news/post/5194118

https://www.theblockbeats.info/news/53690

https://www.binance.com/en/research/projects/ionet

https://www.ibm.com/topics/ai-infrastructure

https://gpus.llm-utils.org/nvidia-h100-gpus-supply-and-demand/

https://www.statista.com/statistics/941835/artificial-intelligence-market-size-revenue-comparisons/

https://www.grandviewresearch.com/press-release/global-cloud-ai-market
