Stargate Project’s Major Pivot: OpenAI Abandons Building for Renting and Wakes From Its $1.4 Trillion Computing Power Empire Dream

According to a March 16 report by The Information, OpenAI has restructured its Stargate computing infrastructure project, abandoning plans to build its own data centers and shifting fully to leasing computing power from cloud service providers such as Microsoft Azure, Oracle, and Amazon Web Services (AWS). Stargate has been split into three functional teams, all managed by Sachin Katti, formerly Intel’s Chief Technology and AI Officer.

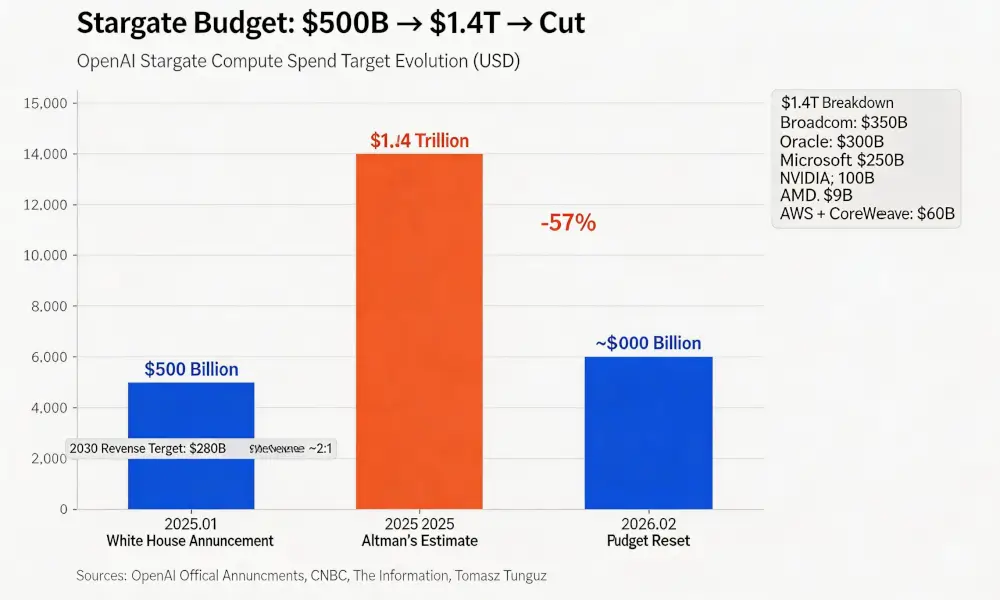

The direct reason for the shift is not complicated. Stargate was announced with great fanfare at the White House in January 2025 as a joint venture with SoftBank and Oracle to build large-scale data centers, with an initial investment of $100 billion and a total investment of $500 billion over four years. However, more than a year after the project’s launch, not a single employee has been hired, and no substantial data center development has taken place. According to CNBC, lenders were unwilling to provide billions of dollars in construction financing to a company still reporting massive operating losses. Earlier this month, OpenAI also withdrew from negotiations to expand the Oracle Stargate facility in Abilene, Texas.

Over a year, zero employees, zero ground broken. Stargate’s “build-it-ourselves” path never truly started.

According to the breakdown data shown in investor materials, the $1.4 trillion total commitment in Altman’s presentation was distributed among seven suppliers. Based on venture analyst Tomasz Tunguz’s analysis of the investor materials, Broadcom accounted for $350 billion, Oracle $300 billion, Microsoft $250 billion, NVIDIA $100 billion, AMD $90 billion, with AWS and CoreWeave together totaling $60 billion.
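As a quick check on the breakdown above, the following sketch sums the figures exactly as listed. Under that reading, the itemized amounts come to $1.15 trillion, which is $250 billion short of the $1.4 trillion headline; the article does not say how the remainder is allocated. The variable names are illustrative, and AWS and CoreWeave are kept as a single combined entry because only their joint total is given.

```python
# Supplier commitments in billions of USD, as listed in the paragraph above.
# AWS and CoreWeave are reported only as a combined figure.
commitments_bn = {
    "Broadcom": 350,
    "Oracle": 300,
    "Microsoft": 250,
    "NVIDIA": 100,
    "AMD": 90,
    "AWS + CoreWeave": 60,
}

HEADLINE_TOTAL_BN = 1400  # the $1.4 trillion headline commitment

listed_total_bn = sum(commitments_bn.values())
unitemized_bn = HEADLINE_TOTAL_BN - listed_total_bn

print(f"Listed total:      ${listed_total_bn}B")
print(f"Not itemized here: ${unitemized_bn}B")

# Share of each supplier relative to the listed (not headline) total.
for name, amount in commitments_bn.items():
    share = amount / listed_total_bn
    print(f"{name:16s} ${amount:>4}B  {share:.1%}")
```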

In February 2026, according to CNBC, this figure was reset to approximately $600 billion (by 2030), a cut of 57%. The same report gave a slightly different but directionally consistent number, with OpenAI expecting to spend $665 billion on cloud servers by 2030.
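The 57% figure can be reproduced with simple arithmetic. The sketch below assumes the baseline is the $1.4 trillion commitment and applies the same calculation to the alternative $665 billion number; `percent_cut` is a helper introduced here purely for illustration.

```python
def percent_cut(old: float, new: float) -> float:
    """Return the reduction from old to new as a percentage of old."""
    return (old - new) / old * 100


ORIGINAL_BN = 1400  # $1.4 trillion, in billions of USD

cut_to_600 = percent_cut(ORIGINAL_BN, 600)
cut_to_665 = percent_cut(ORIGINAL_BN, 665)

print(f"Cut to $600B: {cut_to_600:.0f}%")   # matches the 57% quoted above
print(f"Cut to $665B: {cut_to_665:.1f}%")
```

On these inputs, the $665 billion figure would correspond to a cut of about 52.5%, which is why the two numbers are directionally consistent.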

$600 billion is still a number that needs an anchor to grasp. According to internal OpenAI projections, the company’s revenue target for 2030 is $280 billion, so the cumulative five-year spending would be roughly twice that single-year revenue target (about 2:1). Meanwhile, based on internal financial data cited by ainvest, the company’s projected loss for 2026 is $14 billion, and its gross margin, as reported by multiple media outlets, is only 33%. (Note: gross margin reflects the profitability of the product itself, while net loss is the final result after deducting all costs, including R&D and management; a positive gross margin and a net loss can coexist.)

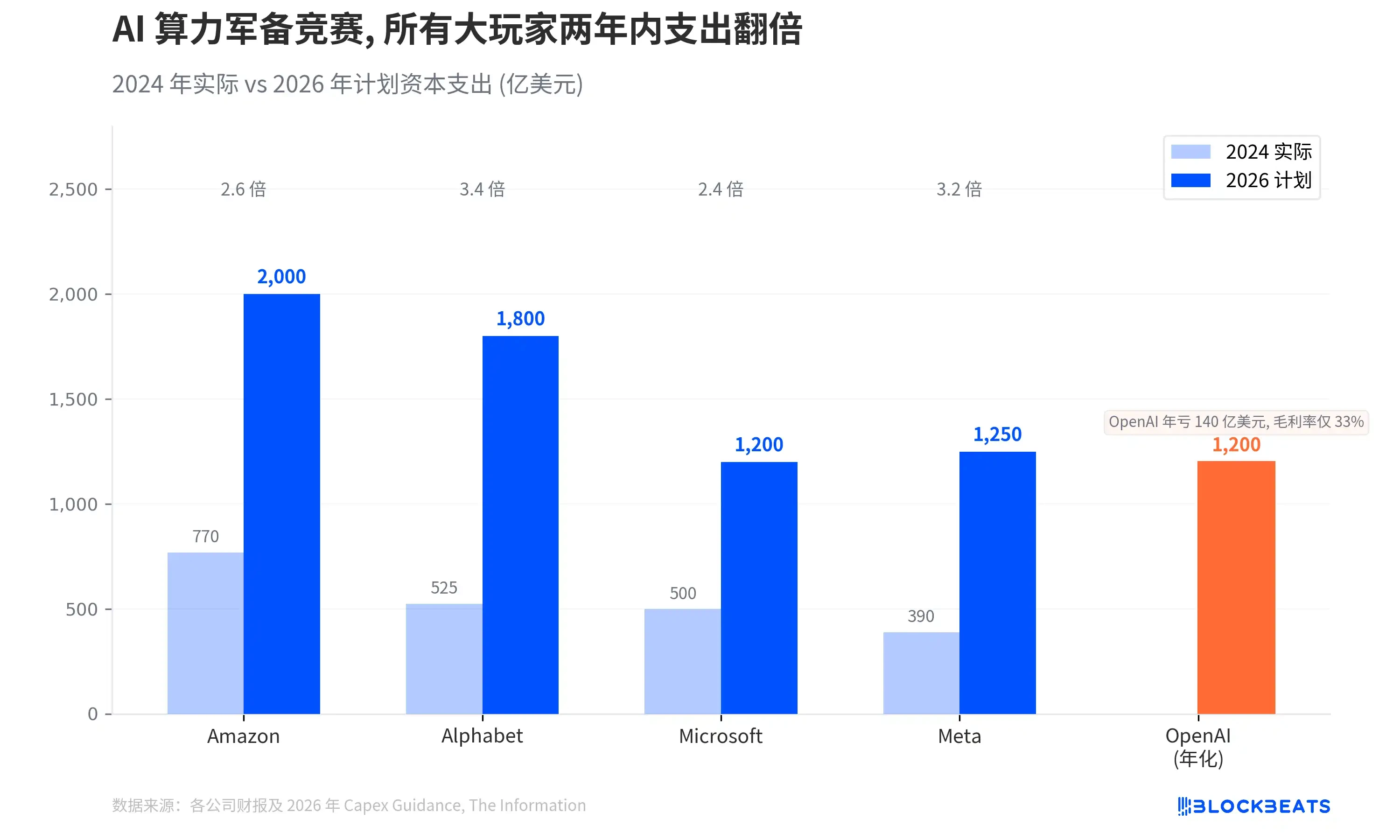

Placing OpenAI’s spending target within the panorama of the Big Tech computing power arms race makes the proportional relationships clearer.

According to company financial reports and public guidance, Amazon plans $200 billion in capital expenditures for 2026, Alphabet $180 billion, Meta $125 billion, and Microsoft approximately $120 billion. The expenditures of these four companies have generally doubled or tripled within two years, totaling over $650 billion, with about three-quarters flowing into AI infrastructure.
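A small tally of the four figures as listed is sketched below. Note that the listed amounts sum to $625 billion, somewhat below the “over $650 billion” total quoted above; the article does not explain the gap, and Microsoft’s figure is given only as approximate. The 75% AI share is taken from the “about three-quarters” statement.

```python
# Planned 2026 capital expenditures in billions of USD, as listed above.
capex_2026_bn = {
    "Amazon": 200,
    "Alphabet": 180,
    "Meta": 125,
    "Microsoft": 120,  # stated as "approximately" in the text
}

AI_SHARE = 0.75  # "about three-quarters" flowing into AI infrastructure

listed_total_bn = sum(capex_2026_bn.values())
ai_infra_bn = listed_total_bn * AI_SHARE

print(f"Listed total capex:        ${listed_total_bn}B")
print(f"Implied AI infrastructure: ${ai_infra_bn:.0f}B")
```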

OpenAI’s $600 billion is a five-year cumulative target, annualizing to about $120 billion, which is comparable to Microsoft’s single-year capital expenditure. The difference is that Microsoft’s annual revenue exceeds $240 billion, while OpenAI’s annualized revenue has just reached $25 billion and is not expected to achieve cash flow positivity before 2030.
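The comparison can be made concrete by dividing annual spending by annual revenue for each company. This is a rough sketch using only the figures in the paragraph above; because Microsoft’s revenue is described as exceeding $240 billion, its ratio here is an upper bound.

```python
# All figures in billions of USD, taken from the paragraph above.
OPENAI_TARGET_BN = 600
YEARS = 5
openai_annual_spend_bn = OPENAI_TARGET_BN / YEARS

microsoft_capex_bn = 120
microsoft_revenue_bn = 240   # text says revenue "exceeds" this figure
openai_revenue_bn = 25       # annualized revenue

# Spending per dollar of revenue for each company.
microsoft_ratio = microsoft_capex_bn / microsoft_revenue_bn
openai_ratio = openai_annual_spend_bn / openai_revenue_bn

print(f"OpenAI annualized spend: ${openai_annual_spend_bn:.0f}B")
print(f"Microsoft spend/revenue: at most {microsoft_ratio:.1f}x")
print(f"OpenAI spend/revenue:    {openai_ratio:.1f}x")
```

On these numbers, Microsoft spends at most about half of a year’s revenue on capital expenditure, while OpenAI’s annualized target is close to five times its current annualized revenue.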

The Stargate restructuring is not just a change in budget numbers; the organizational adjustments reveal a deeper shift in direction.

The restructured Stargate is divided into three lines. The Epic Business Partnerships group is led by OpenAI veteran and former Deloitte manager Peter Hoeschele, and is responsible for managing cloud contracts with Microsoft, Oracle, and Amazon, as well as deals with chip manufacturers. These deals include a multi-year contract with AMD (covering up to 6 gigawatts of chips, with OpenAI receiving warrants for up to 10% of AMD’s common stock) and an agreement with chip startup Cerebras Systems.

The Technical Engineering and Design group is co-led by former Meta and Google engineer Chris Malone and former Microsoft engineering executive Adrian Caulfield, and is responsible for redesigning the AI server clusters used by OpenAI. The Physical Facilities Operations group is led by former Google data center director Nick Saddock, who replaces Keith Heyde, who departed weeks ago.

The semiconductor team led by former Google chip executive Richard Ho is not under Katti’s purview and reports directly to OpenAI President Greg Brockman. This team is collaborating with Broadcom to develop in-house chips, with OpenAI hoping these chips will ultimately reduce the inference costs of running products like ChatGPT.

The name “Stargate” remains, but what it refers to has completely changed. In January 2025, it was a joint venture project to build data centers with SoftBank and Oracle. In March 2026, it is OpenAI’s broad strategy to bring gigawatt-scale server capacity online. It has shifted from “I want to build my own power plant” to “I want to sign the best leases.” The total planned capacity across all sites remains nearly 7 gigawatts, with a three-year investment total still exceeding $400 billion. OpenAI is shifting its computing power focus towards NVIDIA’s Vera Rubin platform, aiming to bring its first gigawatt-scale capacity online in the second half of 2026.
