Intelligent Computing Convergence: The Deep Integration Architecture, Paradigm Evolution, and Application Landscape of AI and the Cryptocurrency Industry

In the third decade of the 21st century, the convergence of Artificial Intelligence (AI) and Cryptocurrency (Crypto) is no longer merely the combination of two buzzwords; it represents a profound revolution in the technological paradigm. As the global cryptocurrency market capitalization officially surpassed the $4 trillion milestone in 2025, the industry has completed its transition from an experimental niche market to a vital component of the modern economy.

One of the core driving forces behind this transformation is the deep integration of AI—an immensely powerful decision-making and processing layer—with blockchain, which serves as a transparent, immutable execution and settlement layer. This combination is addressing the respective pain points of both fields: AI is at a critical juncture in its evolution from centralized monopolies towards a decentralized, transparent era of “Open Intelligence.” Meanwhile, the crypto industry, having established a more robust infrastructure, urgently requires AI to solve problems such as complex on-chain interactions, security vulnerabilities, and insufficient application utility.

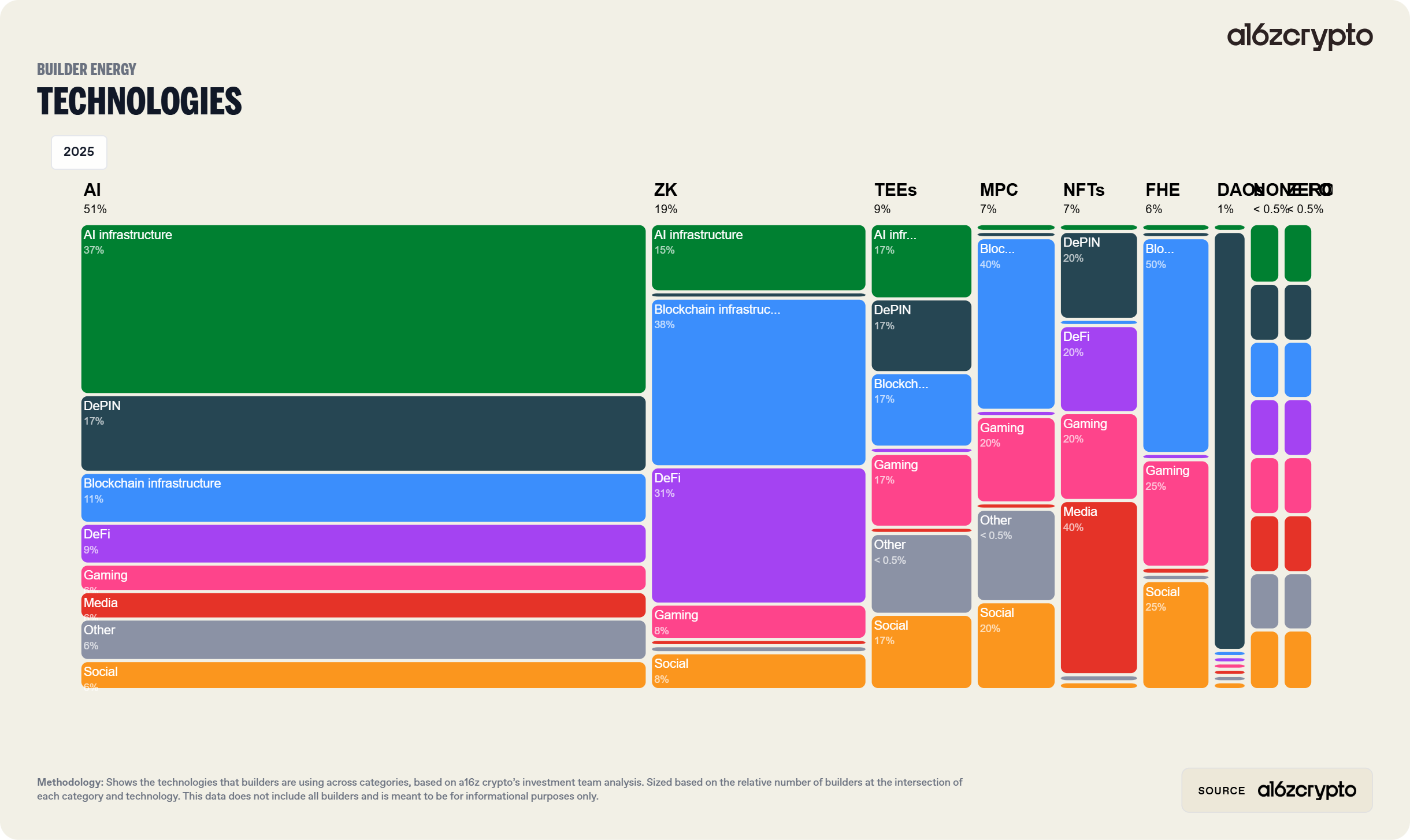

From the perspective of capital flows, the strategic divergence among top-tier venture capital firms confirms this trend. a16z Crypto completed its fifth fundraise of $2 billion in 2025, firmly establishing the intersection of AI and Crypto as its long-term strategic core, viewing blockchain as essential infrastructure to prevent AI censorship and control.

Simultaneously, firms like Paradigm are attempting to capture cross-industry benefits from this technological fusion by expanding their investment horizons into robotics and general AI. According to OECD data, AI accounted for 51% of total global VC investment by 2025. Within the Web3 space, the proportion of funding for AI-related projects is also steadily rising, reflecting the market’s high recognition of the “decentralized intelligence” narrative.

1. Infrastructure Reconfiguration: Decentralized Compute and Computational Integrity

There is a natural contradiction between AI’s insatiable appetite for Graphics Processing Units (GPUs) and the fragility of the current global supply chain. Between 2024 and 2025, GPU shortages became the norm, creating fertile ground for the explosion of Decentralized Physical Infrastructure Networks (DePIN).

1.1 The Dual Evolution of Decentralized Compute Markets

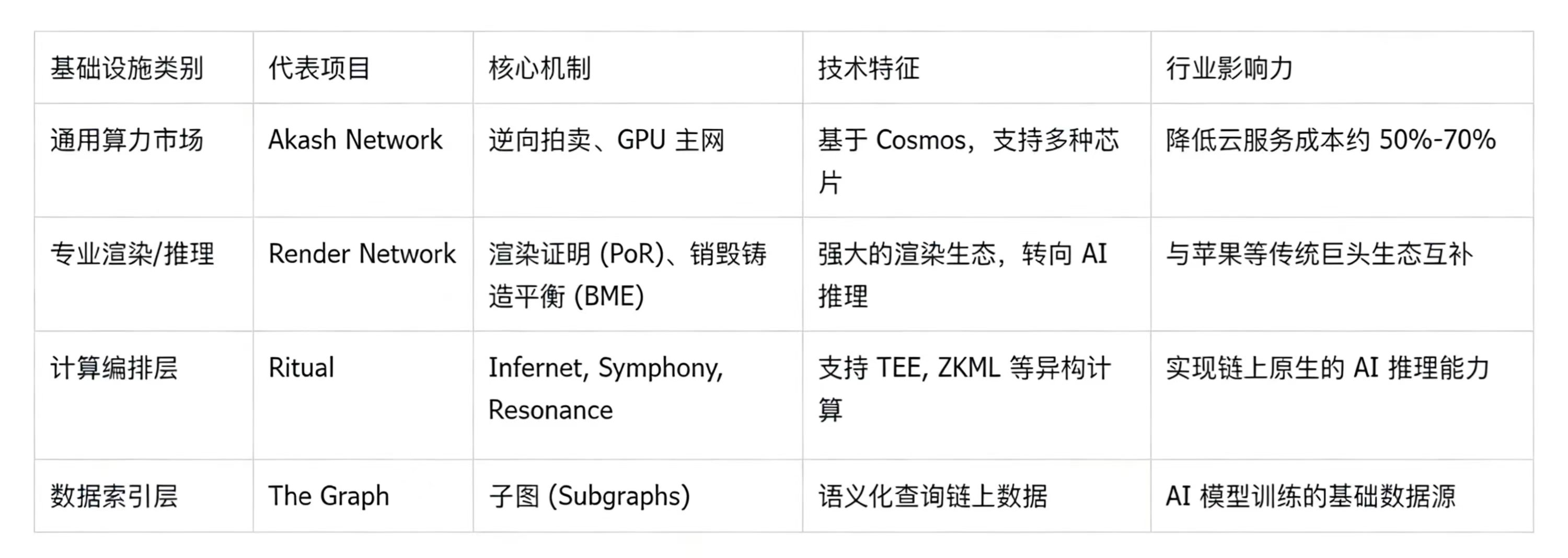

Current decentralized compute platforms are primarily divided into two major camps. The first is represented by Render Network (RNDR) and Akash Network (AKT), which aggregate idle GPU compute power globally by building decentralized two-sided markets. Render Network has become the benchmark for distributed GPU rendering, not only reducing costs for 3D creation but also supporting AI inference tasks through blockchain coordination, enabling creators to access high-performance compute at lower prices. Akash achieved a leap forward post-2023 with its GPU mainnet (Akash ML), allowing developers to rent high-spec chips for large-scale model training and inference.

The second camp is represented by new computational orchestration layers like Ritual. Ritual’s uniqueness lies in not attempting to directly replace existing cloud services, but rather serving as an open, modular sovereign execution layer that embeds AI models directly into the blockchain’s execution environment. Its Infernet product allows smart contracts to seamlessly call AI inference results, solving the long-standing technical bottleneck of “on-chain applications being unable to natively run AI.”

1.2 Computational Integrity and Breakthroughs in Verification Technology

In decentralized networks, verifying “whether computation has been executed correctly” is a core challenge. The technological progress in 2025 has focused mainly on the integrated application of Zero-Knowledge Machine Learning (ZKML) and Trusted Execution Environments (TEE).

The Ritual architecture, through its proof-system agnostic design, allows nodes to choose between TEE code execution or ZK proofs based on task requirements. This flexibility ensures that every inference result generated by an AI model is traceable, auditable, and guaranteed for integrity, even in highly decentralized environments.

2. Democratization of Intelligence: The Rise of Bittensor and Commoditized Markets

The emergence of Bittensor (TAO) marks the entry of AI and Crypto convergence into a new phase of “marketization of machine intelligence.” Unlike traditional single-purpose compute platforms, Bittensor aims to create an incentive mechanism that allows various machine learning models worldwide to connect, learn from each other, and compete for rewards.

2.1 Yuma Consensus: From Linguistics to Consensus Algorithm

The core of Bittensor is the Yuma Consensus (YC), a subjective utility consensus mechanism inspired by Gricean pragmatics.

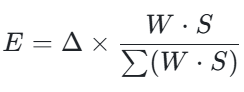

YC’s operational logic assumes that an efficient cooperator tends to output truthful, relevant, and informative answers, as this is the optimal strategy for obtaining the highest rewards within the incentive landscape. Technically, YC calculates token emissions from validators’ stake-weighted evaluations of miners’ performance. In simplified form, before clipping is applied, the emission share of miner j can be written as:

$$E_j = \Delta \cdot \frac{\sum_i S_i W_{ij}}{\sum_k \sum_i S_i W_{ik}}$$

Where $E_j$ is the emission reward of miner j, $\Delta$ is the daily total supply increment, $W_{ij}$ is the weight that validator i assigns to miner j in the matrix of validator evaluation weights, and $S_i$ is the corresponding staking weight of validator i. To prevent malicious collusion or bias, YC introduces a Clipping mechanism, which trims weight settings that exceed the consensus benchmark, ensuring the system’s robustness.
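The clipping step can be illustrated with a minimal Python sketch. It is a simplified stand-in and not Bittensor's actual implementation: it assumes the consensus benchmark for each miner is the stake-weighted median of validator weights (the `kappa = 0.5` threshold is an assumption), and the function names are hypothetical.

```python
from typing import List


def stake_weighted_median(values: List[float], stakes: List[float], kappa: float = 0.5) -> float:
    """Largest weight w such that validators holding at least `kappa`
    of total stake assigned a weight >= w (assumed consensus benchmark)."""
    total = sum(stakes)
    acc = 0.0
    # Walk from the highest weight down, accumulating the stake behind it.
    for value, stake in sorted(zip(values, stakes), key=lambda p: p[0], reverse=True):
        acc += stake
        if acc >= kappa * total:
            return value
    return 0.0


def emission_shares(W: List[List[float]], S: List[float], delta: float, kappa: float = 0.5) -> List[float]:
    """W[i][j] is the weight validator i gives miner j; S[i] is validator i's stake.
    Returns each miner's share of the emission `delta` after clipping."""
    n_validators, n_miners = len(W), len(W[0])
    consensus = [
        stake_weighted_median([W[i][j] for i in range(n_validators)], S, kappa)
        for j in range(n_miners)
    ]
    # Clipping: no validator's weight may exceed the consensus benchmark.
    clipped = [[min(W[i][j], consensus[j]) for j in range(n_miners)] for i in range(n_validators)]
    rank = [sum(S[i] * clipped[i][j] for i in range(n_validators)) for j in range(n_miners)]
    total = sum(rank)
    if total == 0:
        return [0.0] * n_miners
    return [delta * r / total for r in rank]
```

With two honest validators holding 40 units of stake each and one colluding validator holding 20 units that puts all of its weight on a third miner, the colluder's weight is clipped to zero and the third miner receives no emission.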

2.2 Subnet Economics and the Dynamic TAO Paradigm

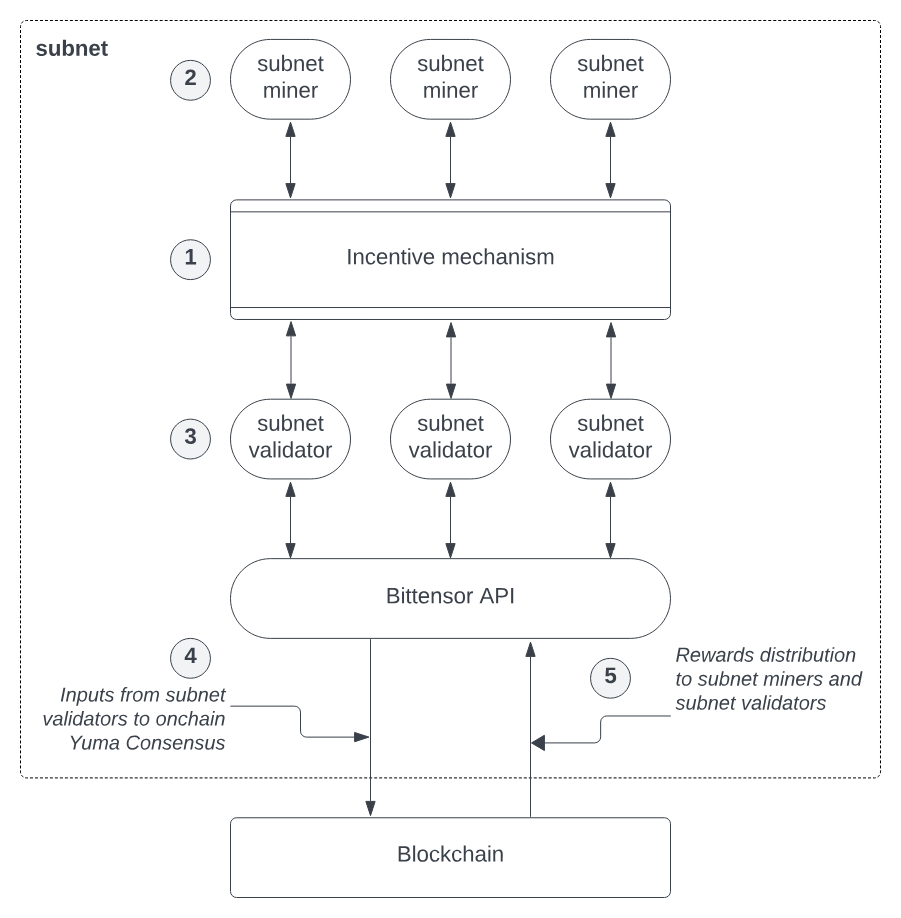

By 2025, Bittensor has evolved into a multi-layered architecture. The base layer is the Subtensor ledger managed by the Opentensor Foundation, while the upper layer consists of dozens of vertically specialized subnets, focusing on specific tasks like text generation, audio prediction, and image recognition.

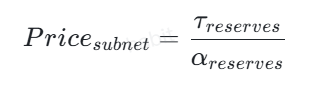

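The introduced “Dynamic TAO” mechanism creates an independent value reserve pool for each subnet via an Automated Market Maker (AMM), with the price of the subnet’s Alpha token determined by the ratio of TAO to Alpha tokens in the pool:

$$P_{\alpha} = \frac{R_{\text{TAO}}}{R_{\alpha}}$$

where $R_{\text{TAO}}$ and $R_{\alpha}$ are the pool’s TAO and Alpha reserves.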

This mechanism enables automatic resource allocation: subnets with high demand and high-quality output will attract more staking, thereby receiving a larger proportion of daily TAO emissions. This competitive market structure is aptly compared to an “Olympics of Intelligence,” where inefficient models are naturally selected out.
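A minimal sketch of how such a pool could behave is shown here. It assumes a constant-product AMM, which the text does not specify, and the class and method names are hypothetical. It shows why staking TAO into a subnet raises that subnet's Alpha price.

```python
from dataclasses import dataclass


@dataclass
class SubnetPool:
    """Hypothetical subnet reserve pool (constant-product assumption)."""
    tao_reserve: float
    alpha_reserve: float

    @property
    def price(self) -> float:
        """Alpha price in TAO: ratio of TAO reserve to Alpha reserve."""
        return self.tao_reserve / self.alpha_reserve

    def stake_tao(self, tao_in: float) -> float:
        """Swap TAO into the pool and return the Alpha received,
        keeping tao_reserve * alpha_reserve constant."""
        if tao_in <= 0:
            raise ValueError("tao_in must be positive")
        k = self.tao_reserve * self.alpha_reserve
        new_tao = self.tao_reserve + tao_in
        new_alpha = k / new_tao
        alpha_out = self.alpha_reserve - new_alpha
        self.tao_reserve, self.alpha_reserve = new_tao, new_alpha
        return alpha_out
```

Under this assumption, a pool holding 1,000 TAO and 4,000 Alpha prices Alpha at 0.25 TAO. Staking another 1,000 TAO returns 2,000 Alpha and moves the price to 1.0 TAO, so demand for a subnet is reflected directly in its price.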

3. The Rise of the Agent Economy: AI Agents as First-Class Citizens of Web3

During the 2024-2025 cycle, AI Agents are undergoing a fundamental metamorphosis from “assistive tools” to “native on-chain entities.” This evolution is reflected not only in the increasing complexity of their technical architecture but, more importantly, in their radically expanded roles and permissions within the Decentralized Finance (DeFi) ecosystem.

The following is an in-depth analysis of this trend:

3.1 Agent Architecture: The Closed Loop from Data to Execution

Current on-chain AI agents are no longer simple scripts but mature systems built upon complex three-layer logic:

- Data Input Layer: Agents fetch real-time on-chain data such as liquidity pools and trading volumes via blockchain nodes or APIs (like Ethers.js), and incorporate off-chain information like social media sentiment and centralized exchange prices through oracles (e.g., Chainlink).

- AI/ML Decision Layer: Agents utilize Long Short-Term Memory networks (LSTM) to analyze price trends, or employ Reinforcement Learning to iteratively optimize strategies in complex market games. The integration of Large Language Models (LLMs) also endows agents with the ability to understand ambiguous human intent.

- Blockchain Interaction Layer: This is the key to achieving “financial autonomy.” Agents can now manage non-custodial wallets, automatically calculate optimal gas fees, handle nonces, and even integrate MEV protection tools (like Jito Labs) to prevent front-running in transactions.
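The three layers above can be sketched as a small closed loop. This is an illustration only: a moving-average rule stands in for the LSTM or reinforcement-learning model, the fee cap uses the common "twice the base fee plus tip" heuristic for EIP-1559 style transactions, and every name here is hypothetical rather than part of any agent framework.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Snapshot:
    """Data input layer: what the agent observed this cycle."""
    prices: List[float]     # recent pool prices, oldest first
    base_fee_gwei: float    # current network base fee


def decide(snapshot: Snapshot, window: int = 3, threshold: float = 0.01) -> Optional[str]:
    """Decision layer stand-in: compare the latest price with the
    moving average of the previous `window` prices."""
    if len(snapshot.prices) < window + 1:
        return None
    average = sum(snapshot.prices[-window - 1:-1]) / window
    last = snapshot.prices[-1]
    if last > average * (1 + threshold):
        return "buy"
    if last < average * (1 - threshold):
        return "sell"
    return None


class NonceTracker:
    """Interaction layer helper: hands out strictly increasing nonces."""

    def __init__(self, start: int) -> None:
        self._next = start

    def take(self) -> int:
        nonce = self._next
        self._next += 1
        return nonce


def build_tx(action: str, snapshot: Snapshot, nonces: NonceTracker, tip_gwei: float = 2.0) -> Dict[str, object]:
    """Interaction layer: assemble an unsigned transaction request."""
    return {
        "action": action,
        "nonce": nonces.take(),
        "max_priority_fee_gwei": tip_gwei,
        # Heuristic cap that tolerates the base fee doubling before inclusion.
        "max_fee_gwei": 2 * snapshot.base_fee_gwei + tip_gwei,
    }
```

Keeping the decision function free of wallet and network details makes it possible to swap in a different model without touching nonce or fee handling.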

3.2 Financial Rails and Agent-to-Agent Transactions

a16z’s 2025 report specifically highlighted the financial backbone for AI agents—protocols like x402 and similar micropayment standards. These standards allow agents to pay for API fees or purchase services from other agents without human intervention. For example, the Olas (formerly Autonolas) ecosystem already processes over 2 million automated transactions between agents monthly, covering tasks from DeFi swaps to content creation.
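The pay-per-call pattern behind such standards can be sketched as follows. The example simulates, in memory, an endpoint that answers with HTTP status 402 (Payment Required) until the caller attaches a payment. The header name, JSON fields, and wallet class are placeholders invented for illustration and do not reproduce the x402 wire format; a real deployment would use signed on-chain payments.

```python
import json
from typing import Dict, Tuple

PRICE_MICRO_USD = 500      # hypothetical price per call
PAYEE = "agent-b"          # hypothetical service provider


def paid_endpoint(headers: Dict[str, str]) -> Tuple[int, str]:
    """Hypothetical paid API: returns (status, body)."""
    terms = json.dumps({"amount_micro_usd": PRICE_MICRO_USD, "pay_to": PAYEE})
    receipt = headers.get("X-Payment-Receipt")  # placeholder header name
    if receipt is None:
        return 402, terms
    paid = json.loads(receipt)
    if paid.get("pay_to") != PAYEE or paid.get("amount_micro_usd", 0) < PRICE_MICRO_USD:
        return 402, terms
    return 200, json.dumps({"result": "ok"})


class AgentWallet:
    """Hypothetical wallet with a per-call spending limit set by its owner."""

    def __init__(self, balance_micro_usd: int, per_call_limit: int) -> None:
        self.balance = balance_micro_usd
        self.per_call_limit = per_call_limit

    def pay(self, amount: int, pay_to: str) -> str:
        if amount > self.per_call_limit:
            raise PermissionError("amount exceeds per-call limit")
        if amount > self.balance:
            raise ValueError("insufficient balance")
        self.balance -= amount
        return json.dumps({"amount_micro_usd": amount, "pay_to": pay_to})


def call_with_payment(wallet: AgentWallet, endpoint) -> Tuple[int, str]:
    """Call once; if payment is required, pay and retry without a human in the loop."""
    status, body = endpoint({})
    if status != 402:
        return status, body
    terms = json.loads(body)
    receipt = wallet.pay(terms["amount_micro_usd"], terms["pay_to"])
    return endpoint({"X-Payment-Receipt": receipt})
```

The per-call limit is the design point worth noting: the agent acts autonomously, but only inside a budget its owner has set in advance.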

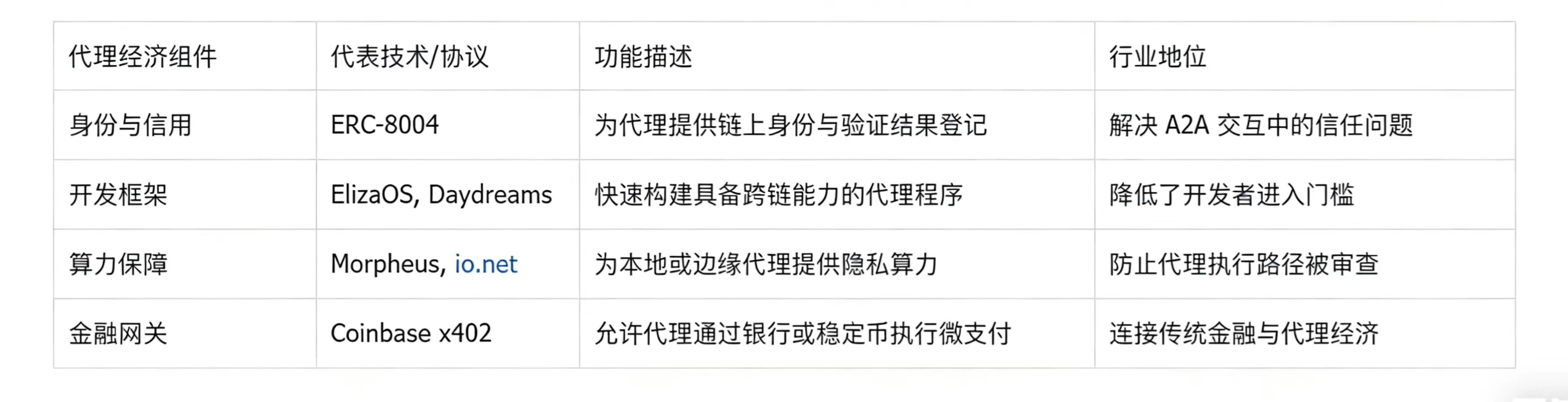

Agent Economy Components

This trend is tangibly reflected in market data. In terms of growth rate, the AI agent market is on the cusp of an explosion. According to MarketsandMarkets research, the global AI agent market is projected to grow from $7.84 billion in 2025 to $52.62 billion by 2030, with a Compound Annual Growth Rate (CAGR) of 46.3%. Furthermore, Grand View Research provides a similar long-term forecast, estimating the market size to reach $50.31 billion by 2030.
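As a consistency check on the MarketsandMarkets figures, the growth rate follows from the two endpoints over the five years from 2025 to 2030:

$$\text{CAGR} = \left(\frac{52.62}{7.84}\right)^{1/5} - 1 \approx 6.712^{0.2} - 1 \approx 0.463$$

This reproduces the quoted 46.3% rate.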

Simultaneously, standardized tools at the development layer are beginning to take shape. The ElizaOS framework, championed by a16z, has become the infrastructure for the AI agent space, akin to “Next.js” in front-end development. It allows developers to easily deploy AI agents with full financial capabilities on mainstream social platforms like X, Discord, and Telegram. As of early 2025, the total market capitalization of Web3 projects built on this framework has exceeded $20 billion.

4. Privacy Computing and Confidentiality: The Contest Between FHE, TEE, and ZKML

Privacy is one of the most challenging issues in the convergence of AI and Crypto. When enterprises run AI strategies on public blockchains, they neither want to leak private data nor expose their core model parameters. Currently, the industry has formed three main technological paths: Fully Homomorphic Encryption (FHE), Trusted Execution Environments (TEE), and Zero-Knowledge Machine Learning (ZKML).

4.1 Zama and the Industrialization Journey of FHE

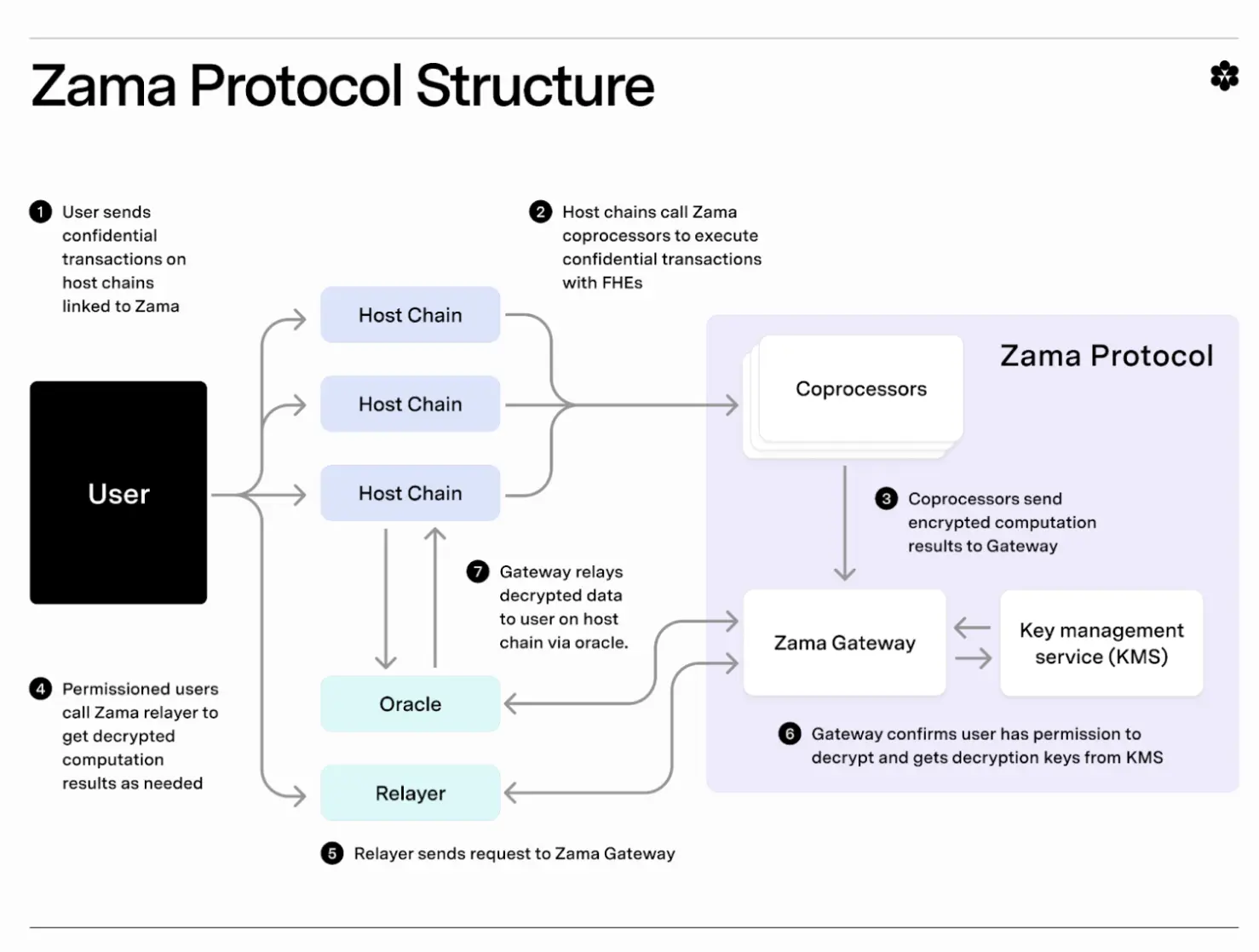

Zama, as a leading unicorn in this field, has made its fhEVM the standard for achieving “end-to-end encrypted computation.” FHE allows computers to perform mathematical operations on encrypted data without decrypting it, with results identical to plaintext operations upon decryption.
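The idea of computing on ciphertexts can be illustrated with a toy example. The sketch below uses the Paillier cryptosystem, which is only additively homomorphic. It is not FHE, it is not the scheme Zama uses, and the tiny hard-coded primes make it insecure. It only demonstrates that the sum of two values can be computed without decrypting either one.

```python
import math
import random
from typing import Tuple

PublicKey = Tuple[int, int]    # (n, g)
PrivateKey = Tuple[int, int]   # (lam, mu)


def _l(x: int, n: int) -> int:
    """Paillier's L function: L(x) = (x - 1) / n."""
    return (x - 1) // n


def keygen(p: int = 1789, q: int = 1861) -> Tuple[PublicKey, PrivateKey]:
    """Toy key generation from two small primes (insecure, demo only)."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p-1, q-1)
    g = n + 1
    mu = pow(_l(pow(g, lam, n * n), n), -1, n)  # modular inverse (Python 3.8+)
    return (n, g), (lam, mu)


def encrypt(pub: PublicKey, m: int, rng: random.Random) -> int:
    """Encrypt 0 <= m < n with fresh randomness r coprime to n."""
    n, g = pub
    while True:
        r = rng.randrange(1, n)
        if math.gcd(r, n) == 1:
            break
    return (pow(g, m, n * n) * pow(r, n, n * n)) % (n * n)


def decrypt(pub: PublicKey, priv: PrivateKey, c: int) -> int:
    n, _ = pub
    lam, mu = priv
    return (_l(pow(c, lam, n * n), n) * mu) % n


def add_encrypted(pub: PublicKey, c1: int, c2: int) -> int:
    """Multiplying ciphertexts adds the underlying plaintexts (mod n)."""
    n, _ = pub
    return (c1 * c2) % (n * n)
```

For example, encrypting 1200 and 345, combining the ciphertexts with `add_encrypted`, and decrypting the result yields 1545, even though the party doing the addition never sees either input. FHE extends this property to arbitrary computations, including both addition and multiplication.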

By 2025, Zama’s tech stack has achieved significant performance leaps: a 21x speedup for 20-layer Convolutional Neural Networks (CNNs) and a 14x speedup for 50-layer CNNs. This progress makes “privacy stablecoins” (where transaction amounts are encrypted externally but the protocol can still verify legitimacy) and “sealed-bid auctions” feasible on mainstream chains like Ethereum.

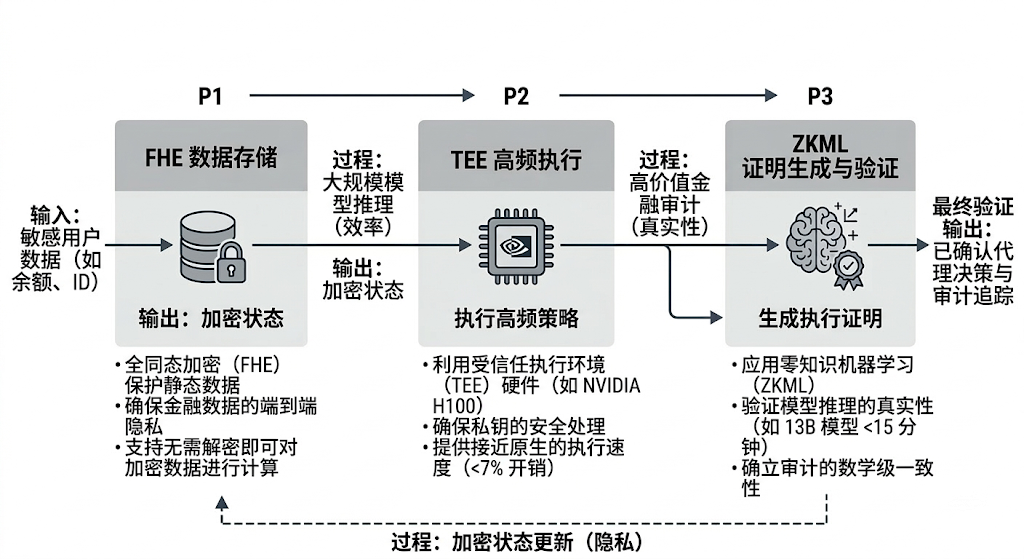

4.2 Verification Efficiency of ZKML and Its Integration with LLMs

Zero-Knowledge Machine Learning (ZKML) focuses on “verification” rather than “computation.” It allows one party to prove they correctly ran a complex neural network model without revealing input data or model weights. The latest zkLLM protocols can now perform end-to-end inference verification for 13-billion-parameter models, reducing proof generation time to under 15 minutes and proof size to just 200 KB. This technology is crucial for high-value financial audits and medical diagnostics.

4.3 Synergy Between TEE and GPU: The Power of Hopper H100

Compared to FHE and ZKML, TEE (Trusted Execution Environment) offers execution speeds close to native performance. NVIDIA’s H100 GPU introduces confidential computing capabilities, isolating memory through hardware-level firewalls, with inference overhead typically below 7%. Protocols like Ritual are heavily adopting GPU-based TEEs to support AI agent applications requiring low latency and high throughput.

Privacy computing technologies have officially transitioned from idealistic laboratory concepts into a new era of “production-grade industrialization.” Fully Homomorphic Encryption (FHE), Zero-Knowledge Machine Learning (ZKML), and Trusted Execution Environments (TEE) are no longer isolated technological tracks; they now collectively form a “modular confidentiality stack” for decentralized artificial intelligence.

This fusion is fundamentally rewriting the underlying logic of Web3, leading to the following three core conclusions:

- FHE is the “HTTPS” Underlying Standard for Web3: With unicorns like Zama boosting computational performance by tens of times, FHE is enabling a qualitative shift from “everything public” to “encrypted by default.” It solves the privacy challenge of on-chain state processing, bringing privacy stablecoins and fully MEV-resistant trading systems from theory to large-scale compliant applications.

- ZKML is the Mathematical Endpoint for Algorithmic Accountability: The “ZKML Singularity” arriving in the second half of 2025 marks a dramatic reduction in verification costs. By compressing inference proofs for 13-billion-parameter (13B) models to under 15 minutes, ZKML provides “mathematical-grade consistency” guarantees for high-value financial audits and credit ratings, ensuring AI is no longer an untrustworthy black box.

- TEE is the Performance Foundation for the Agent Economy: Compared to software solutions, hardware-based TEEs like those on NVIDIA H100 offer near-native execution speeds with overhead below 7%. It is currently the only economically viable solution capable of supporting hundreds of millions of AI Agents for 24/7 real-time decision-making, ensuring agents securely hold private keys and execute complex strategies within hardware-level firewalls.

The future technological trend is not about the victory of a single path but the widespread adoption of “Hybrid Confidential Computing.” In a complete AI business flow: TEE is used for large-scale, high-frequency model inference to ensure efficiency; critical nodes generate execution proofs via ZKML to ensure authenticity; and sensitive financial states (like account balances and private IDs) are encrypted and settled using FHE.
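The division of labor described above can be expressed as a simple routing rule. The sketch below is illustrative only: the task attributes and the priority order (sensitive state first, then provability, then speed) are assumptions, not a published specification.

```python
from dataclasses import dataclass
from enum import Enum


class Backend(Enum):
    TEE = "tee"      # fast, hardware-isolated inference
    ZKML = "zkml"    # slower, but yields a verifiable proof
    FHE = "fhe"      # computes directly on encrypted state


@dataclass
class Task:
    """Hypothetical description of one step in an AI business flow."""
    name: str
    touches_sensitive_state: bool = False  # e.g. balances, private IDs
    needs_public_proof: bool = False       # e.g. an audited decision


def choose_backend(task: Task) -> Backend:
    """Route a task to the confidentiality technology that fits it."""
    if task.touches_sensitive_state:
        return Backend.FHE
    if task.needs_public_proof:
        return Backend.ZKML
    return Backend.TEE
```

In this sketch, routine inference falls through to the TEE path, which reflects its role as the default for high-frequency work, while the more expensive paths are reserved for steps that need them.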

This “trinity” fusion is reshaping the crypto industry from a “transparent public ledger” into a “sovereign private intelligent system,” truly ushering in the era of an automated agent economy worth trillions of dollars.

5. Industry Security and Automated Auditing: AI as Web3’s “Immune System”

The cryptocurrency industry has long been plagued by massive losses due to smart contract vulnerabilities. The introduction of AI is changing this reactive defense posture, shifting it from expensive manual audits to real-time AI monitoring.

5.1 Innovation in Static and Dynamic Audit Tools

Tools like Slither and Mythril have deeply integrated machine learning models by 2025, enabling them to scan Solidity contracts for reentrancy attacks, suicidal functions, or abnormal gas consumption in sub-second times. Furthermore, fuzzing tools like Foundry and Echidna utilize AI to generate extreme input data, probing deeply hidden logical vulnerabilities.

5.2 Real-Time Threat Prevention Systems

Beyond pre-deployment audits, real-time defense has also made significant progress. Systems like Guardrail’s Guards AI and CUBE3.AI can monitor all pending transactions (Mempool) across chains. Upon detecting signals of malicious attacks (such as governance attacks or oracle manipulation), they can automatically trigger contract pauses or intercept malicious transactions. This “active immunity” significantly reduces the risk of hacks for DeFi protocols.

A Practical Roadmap for Leveraging AI to Develop Crypto

In the future digital landscape, the fusion of AI and Crypto is no longer a technological experiment but a profound revolution concerning “production efficiency” and “wealth distribution rights.” This combination not only gives AI an independent “wallet” to manage but also endows Crypto with an autonomous “brain” to think, jointly opening the era of an autonomous agent economy worth trillions of dollars.

The following outlines the core benefits and practical roadmap of this fusion at the enterprise and individual levels:

1. Enterprise Level: From “Cost Reduction and Efficiency Improvement” to “Business Boundary Expansion”

For enterprises, the convergence of AI and Crypto primarily addresses the structural contradiction between high compute costs, fragile system security, and data privacy protection.

- Drastic Reduction in Infrastructure Costs (DePIN Effect): Leveraging distributed compute networks (like Akash or Render), enterprises are no longer constrained by expensive NVIDIA H100 cluster procurement. Measured data shows that renting globally idle GPUs can reduce costs by 39% to 86% compared to traditional cloud service providers. This “compute freedom” allows even startups to afford fine-tuning and training of ultra-large-scale models.

- Automation and Cost Reduction of Security Barriers: Traditional contract audits are lengthy and expensive. Now, by deploying AI security agents powered by neural networks, like AuditAgent, enterprises can achieve “s
